Unleash your Android E2E tests’ full potential with Flank

(Thx to @CaunterEmma for the EN proofreading, @mhidaka and @tahia910 for the JP proofreading, and the Test Online group for their support and providing 🍻)

A few weeks ago, I gave a public presentation about Flank (my first time in public + IN JAPANESE). It was quite a challenge for me!

To prepare properly, I decided to write a blog post about my experience with this wonderful tool, which both reduces the waiting time for UI test runs and opens up new opportunities thanks to the way it is implemented.

Test Lab

If you’re already writing Android E2E tests (end-to-end, aka UI tests), you probably already know Firebase Test Lab.

It’s a Google service providing access to on-demand virtual or real devices, in order to run a variety of tests. For traditional Android development, mainly Instrumentation tests (= Espresso and UI Automator) and Robo tests (no code) are supported.

With Test Lab, you can use a YAML-based configuration to run your tests across multiple device types (Samsung, Sony, etc.) and API versions (from 19 to the latest ones).

Traditional testing with gcloud

The default way to use Test Lab is through gcloud, the command-line application provided by Google to access various Google Cloud services (Firebase being one of them).

You don’t even need any YAML to start using it:

    # --app comes from ./gradlew app:assembleDebug
    # --test comes from ./gradlew app:assembleDebugAndroidTest
    gcloud firebase test android run \
      --type instrumentation \
      --app app-debug-unaligned.apk \
      --test app-debug-test-unaligned.apk \
      --device model=Nexus6,version=21,locale=en,orientation=portrait \
      --device model=Nexus7,version=29,locale=fr,orientation=landscape

Sadly, gcloud suffers from some limitations:

- all tests run sequentially, which is particularly annoying given that each E2E test usually takes several seconds or more,

- it is based on Python, which needs to be available locally and/or in your CI depending on your needs,

- being a multi-purpose tool, it is updated frequently, which becomes frustrating if you only use it once in a while…

All these reasons motivated me to try Flank, a tool I had found quite some time ago but hadn’t investigated thoroughly, since I had mistakenly thought that setting up parallel execution would be a pain.

Upgrade to Flank

Flank provides many features out-of-the-box:

- Test sharding (= parallel execution)

- YAML compatible with gcloud

- Cost reporting

- Stability testing

- HTML report

- JUnit XML

All the magic behind Flank is powered by the linkedin/dex-test-parser library, which offers a new way of orchestrating your test execution compared to the black-box approach used by gcloud.

Because Flank knows in advance all of the methods that will be run before the tests even start, it can offer new features like easy test sharding.
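
To see why knowing the test list up front matters, here is a minimal Kotlin sketch of the idea. It is not Flank’s actual code: `TestMethod` and `splitIntoShards` are hypothetical names, and the round-robin split is only the simplest possible strategy.

```kotlin
// Hypothetical model of a test discovered by parsing the test APK before the run.
data class TestMethod(val className: String, val methodName: String) {
    override fun toString() = "$className#$methodName"
}

// Split the known tests into at most `maxShards` groups, round-robin.
// Each group could then be sent to its own device and run in parallel.
fun splitIntoShards(tests: List<TestMethod>, maxShards: Int): List<List<TestMethod>> {
    require(maxShards > 0) { "maxShards must be positive" }
    val shardCount = minOf(maxShards, tests.size)
    return List(shardCount) { shard ->
        tests.filterIndexed { index, _ -> index % shardCount == shard }
    }
}

fun main() {
    val tests = listOf(
        TestMethod("LoginTest", "loginSucceeds"),
        TestMethod("LoginTest", "loginFails"),
        TestMethod("SearchTest", "showsResults"),
        TestMethod("CheckoutTest", "paysWithCard"),
        TestMethod("CheckoutTest", "appliesCoupon")
    )
    splitIntoShards(tests, maxShards = 2).forEachIndexed { index, shard ->
        println("Shard $index: $shard")
    }
}
```

Once the list of tests is known, the split itself is ordinary list manipulation, which is what leaves room for more advanced strategies.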

Also, since an Android developer or an Android-friendly CI always has access to a JVM, the fact that Flank is implemented in Kotlin makes it easy to download a release from GitHub and start using it with a single command:

    $ curl -L https://github.com/Flank/flank/releases/download/v21.03.1/flank.jar -o flank.jar
    $ java -jar flank.jar
    flank.jar
     [-v] [--debug] [COMMAND]
          --debug     Enables debug logging
      -v, --version   Prints the version
    Commands:
      firebase
      ios
      android
      refresh           Downloads results for the last Firebase Test Lab run
      cancel            Cancels the last Firebase Test Lab run
      auth              Manage oauth2 credentials for Google Cloud
      provided-software
      network-profiles  Explore network profiles available for testing.
      ip-blocks         Explore IP blocks used by Firebase Test Lab devices.

Easily migrate from gcloud to Flank

Flank supports the same configuration syntax as gcloud, plus some additions in a dedicated section, which makes it easy to switch between the two.

Here is the most basic example we use to run tests at Mercari:

    gcloud:
      results-history-name: Flank for app_jp
      timeout: 45m
      record-video: true
      use-orchestrator: true
      test-targets:
        - size large
      environment-variables:
        clearPackageData: true
      device:
        - model: beyond1
          version: 28
          orientation: portrait
          locale: ja_JP
        - model: flame
          version: 29
          orientation: portrait
          locale: ja_JP
    flank:
      ## test shards - the amount of groups to split the test suite into
      max-test-shards: 8
      ## test runs - the amount of times to run the tests.
      num-test-runs: 1
      ## The billing enabled Google Cloud Platform project name to use
      project: my-firebase-project
      ## The max time this test run can execute before it is cancelled (default: unlimited).
      run-timeout: 180m

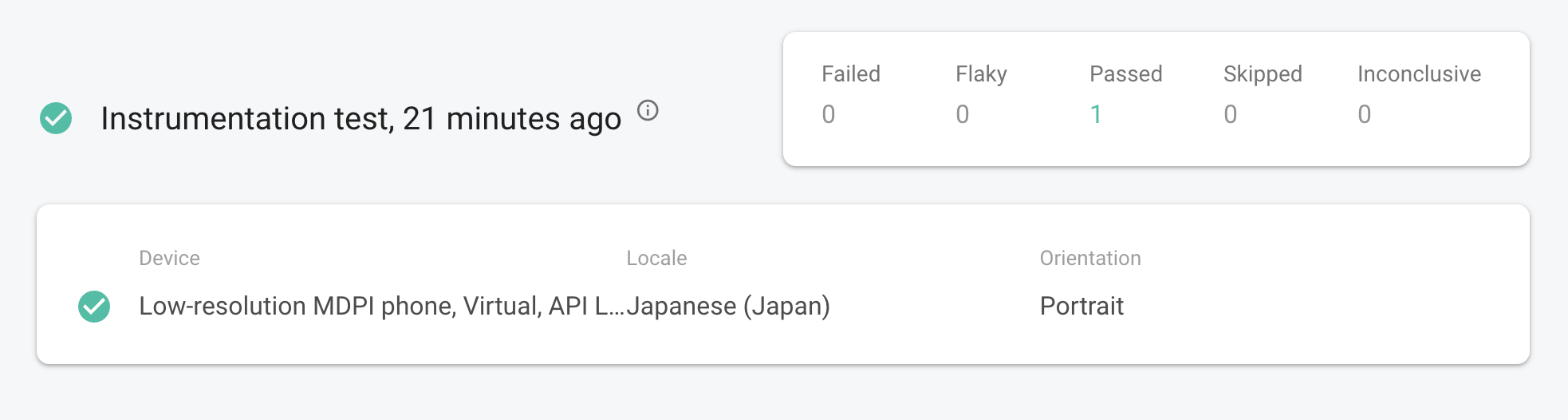

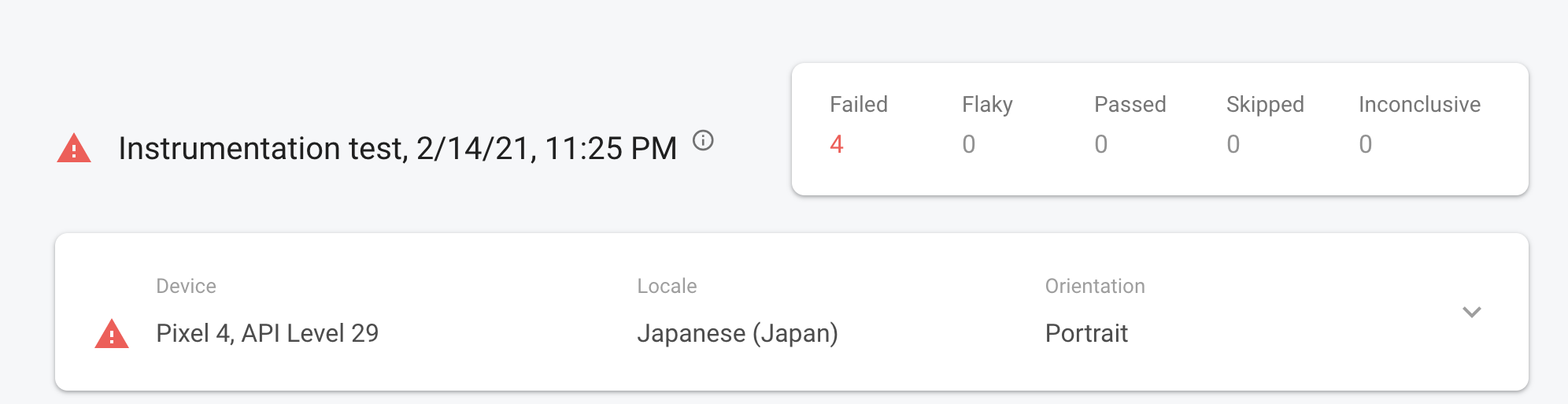

gcloud using the Test Lab Dashboard:

Same screen using flank:

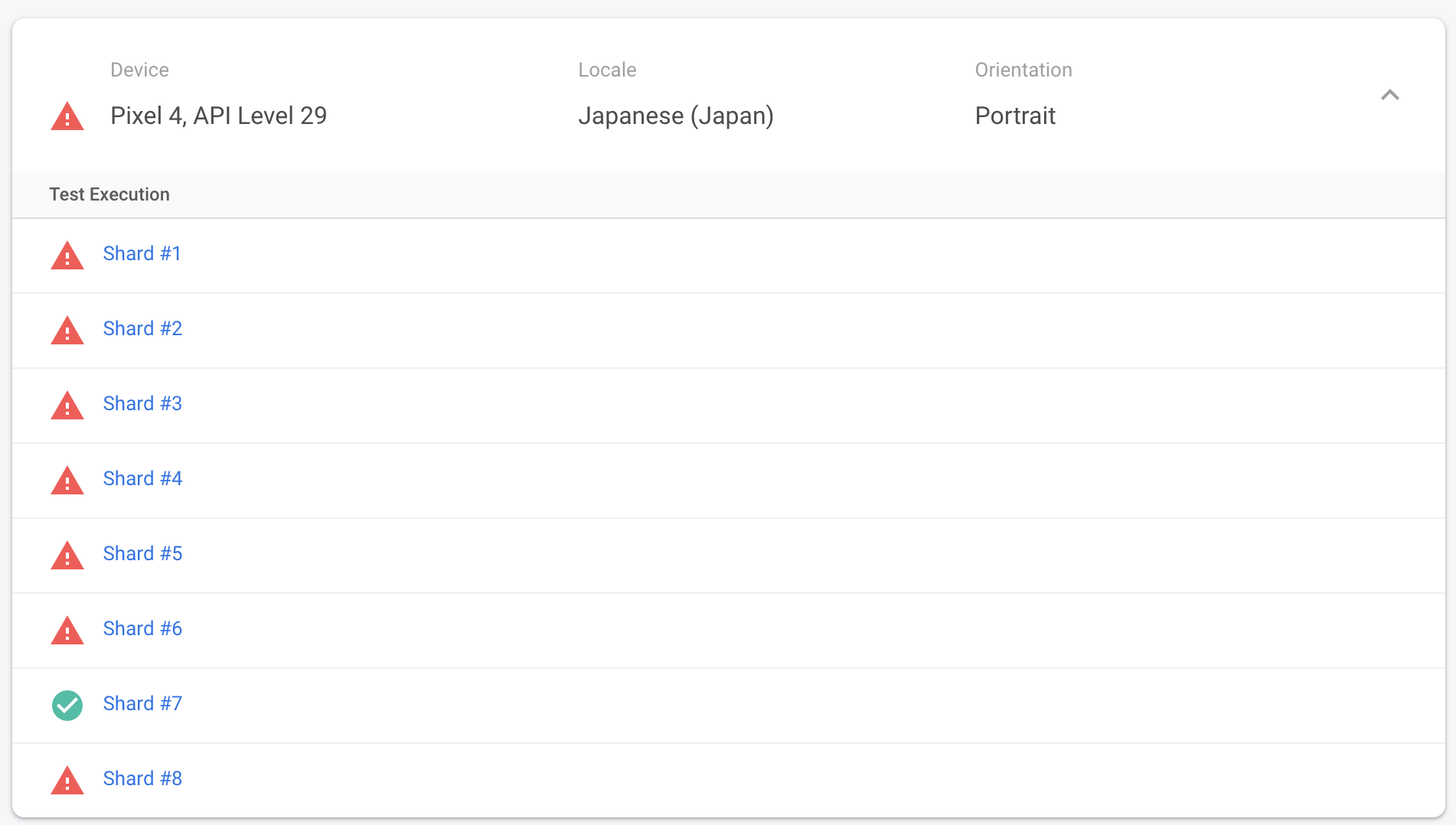

A multi-shard view powered by Flank:

From Gradle

The Gradle plugin (Fladle) lets Gradle users configure Flank without having to write any YAML 🙌

    fladle {
        // Required parameters
        serviceAccountCredentials = project.layout.projectDirectory.file("account.json")
        variant = "freeDebug"
        // Optional parameters
        useOrchestrator = false
        environmentVariables = [
            "clearPackageData": "true"
        ]
        // etc...
    }

That’s the solution I would recommend if you’re planning to mainly use Flank locally or in a simple CI flow.

From GitHub Actions

There’s even a ready-made GitHub Action that lets you integrate it into your existing workflows 👍

    - name: flank run
      id: flank_run
      uses: Flank/flank@master
      with:
        # Flank version to run
        version: v21.03.1
        # Service account file content from secrets
        service_account: ${{ secrets.SERVICE_ACCOUNT }}
        # Run Android tests
        platform: android
        # Path to configuration file from local repo
        flank_configuration_file: './testing/android/flank-simple-success.yml'
    - name: output
      run: |
        # Use local directory output
        echo "Local directory: ${{ steps.flank_run.outputs.local_results_directory }}"
        # Use Gcloud storage output
        echo "Gcloud: ${{ steps.flank_run.outputs.gcloud_results_directory }}"

Being able to use the outputs of a previous step by referring to it through its ID is something I discovered with this integration, and it is really helpful when you have to maintain complex workflows using several tools.

Test sharding challenges

Sadly, running your UI tests in parallel brings some new challenges. You need to be aware of them beforehand, otherwise you’ll probably waste your own or your teammates’ time debugging this kind of issue:

Test dependencies

This kind of issue can be illustrated by a simple scenario, showing how using a common user account across all your tests can cause unexpected problems:

- Test1 is logged in as UserA.

- Test2 logs in with the same UserA.

- A login notification is displayed in Test1, breaking the test flow…

This could already happen with gcloud, but because Flank runs can be highly parallelized, this kind of issue may break many more tests over time.

Even though this article doesn’t aim to give every solution to this problem, be aware of all your test dependencies, such as:

- user accounts,

- backend access (and its changes over time),

- backend scalability (the backend will be under heavier load with multiple tests running in parallel).
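
One way to reduce the shared-account problem is to give every test its own account on every device. The Kotlin sketch below assumes your backend lets you create or reserve test users by name. `testAccountFor` and the `e2e-` email scheme are hypothetical and not part of Flank or Test Lab.

```kotlin
// Hypothetical helper: derive a stable account name from the device and the test identity,
// so that tests running in parallel on different shards or devices do not share a login.
fun testAccountFor(
    device: String,
    className: String,
    methodName: String,
    domain: String = "example.com"
): String {
    val slug = "$device-$className-$methodName"
        .lowercase()
        .replace(Regex("[^a-z0-9]+"), "-")
        .trim('-')
    return "e2e-$slug@$domain"
}

fun main() {
    println(testAccountFor("beyond1", "LoginTest", "loginSucceeds"))
    println(testAccountFor("flame", "LoginTest", "loginSucceeds"))
}
```

The names only stay distinct as long as your test names differ in their letters and digits, so treat this as a starting point rather than a guarantee.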

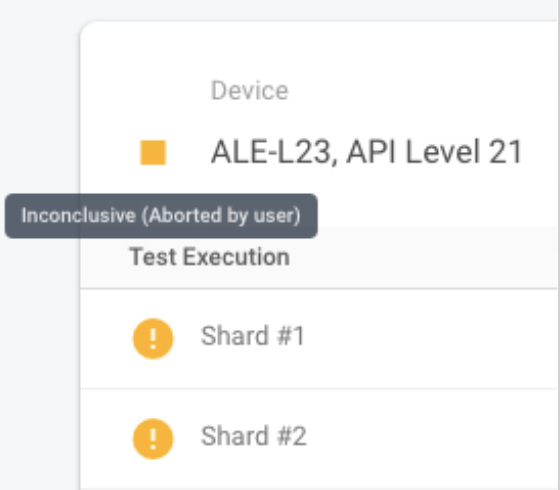

Test Lab device availability

By requesting a high number of devices, you may have to wait longer before getting access to these shared resources. The waiting time depends heavily on the device you’re requesting.

In our case, we quickly ran into a shortage of a specific Samsung device, which left our CI waiting for more than 3 hours without success…

Here is an example of how this error was happening for us:

Sadly, for now it’s impossible to predict when a device will be available in Test Lab, and the latest version of the Test Lab dashboard recommends contacting the Firebase team on the official Firebase Slack if you’re facing this issue.

Flank on steroids with “Smart Flank”

The basic example I gave before used a fixed number of devices, but Flank is capable of arranging your test execution to minimize the number of devices you’re using, while still aiming for the execution time you requested when configuring it.

This smart mode is only available when using some specific options in the provided YAML:

    flank:
      ### Max Test Shards
      ## test shards - the amount of groups to split the test suite into
      ## set to -1 to use one shard per test. default: 1
      max-test-shards: 50

      ### Shard Time
      ## shard time - the amount of time tests within a shard should take
      ## when set to > 0, the shard count is dynamically set based on time up to the maximum limit defined by max-test-shards
      ## 2 minutes (120) is recommended.
      ## default: -1 (unlimited)
      shard-time: 120

      ### Smart Flank GCS Path
      ## Google cloud storage path where the JUnit XML results from the last run is stored.
      ## NOTE: Empty results will not be uploaded
      #smart-flank-gcs-path: gs://tmp_flank/tmp/JUnitReport.xml
      smart-flank-gcs-path: ./past-JUnitReport.xml

      ### Use Average Test Time for New Tests flag
      ## Enable using average time from previous tests duration when using SmartShard and tests did not run before.
      ## Default: false
      use-average-test-time-for-new-tests: true

      ### Default Test Time
      ## Set default test time used for calculating shards.
      ## Default: 120.0
      default-test-time: 15

      ### Default Class Test Time
      ## Set default test time (in seconds) used for calculating shards of parametrized classes when previous tests results are not available.
      ## Default test time for classes should be different from the default time for test
      ## Default: 240.0
      # default-class-test-time: 30

As you can see, the main challenge is to have access to the JUnit report from a previous run, so that past execution results can be used when running the same tests again.

I haven’t really considered this way of using Flank so far, because there isn’t a big difference in duration between our tests. And even if saving and restoring files between jobs in our CI is easy, I guess the multi-device setup we’re using means we would have to consider carefully which report to use for which execution…

But if you’re just running your tests on a single device, it’s probably worth investigating this improvement.
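
To make the shard-time idea more concrete, here is a rough Kotlin sketch of time-based sharding. It is not Flank’s implementation: `planShards` is a hypothetical function, the durations map stands in for what would be read from the previous JUnit XML report, and the greedy "longest test first" packing is just one common heuristic.

```kotlin
import kotlin.math.ceil

// Hypothetical planner. `pastDurations` maps a test name to its duration (in seconds)
// from a previous run; tests missing from it fall back to `defaultTestTime`.
fun planShards(
    tests: List<String>,
    pastDurations: Map<String, Double>,
    shardTime: Double,
    maxShards: Int,
    defaultTestTime: Double = 120.0
): List<List<String>> {
    require(shardTime > 0 && maxShards > 0) { "shardTime and maxShards must be positive" }
    if (tests.isEmpty()) return emptyList()

    val durations = tests.associateWith { pastDurations[it] ?: defaultTestTime }
    // Enough shards to keep each one close to `shardTime`, capped by `maxShards`.
    val shardCount = ceil(durations.values.sum() / shardTime).toInt()
        .coerceIn(1, minOf(maxShards, tests.size))

    val shards = List(shardCount) { mutableListOf<String>() }
    val load = DoubleArray(shardCount)
    // Greedy packing: place the longest tests first, each into the least-loaded shard.
    for ((test, seconds) in durations.entries.sortedByDescending { it.value }) {
        val target = load.indices.minByOrNull { load[it] }!!
        shards[target].add(test)
        load[target] += seconds
    }
    return shards
}

fun main() {
    val past = mapOf("login" to 100.0, "search" to 60.0, "checkout" to 50.0, "profile" to 30.0)
    val plan = planShards(past.keys.toList(), past, shardTime = 120.0, maxShards = 50)
    plan.forEachIndexed { index, shard -> println("Shard $index: $shard") }
}
```

With 240 seconds of known test time and a 120-second target, this sketch ends up with two shards instead of the 50 allowed by the upper limit, which is the kind of saving the configuration above is after.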

Easily extend Flank with your Kotlin skills

If you already know Kotlin (which is likely if you’re working on Android), you can easily extend Flank’s capabilities with some custom code, in order to add new YAML filters or any other improvement you may benefit from.

Here is an example where I added a new test filter, based on our company’s test naming conventions.

Being able to extend and validate this kind of logic without having to run an instrumented test is definitely an enjoyable experience. And the Flank codebase is not too big, so you can quickly start hacking on it.
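
As a rough illustration of what a naming-convention filter can look like, here is a plain Kotlin sketch. It is not the actual change made to Flank and it does not use Flank’s internal types: `TestCase`, `TestFilter`, and the `smoke_` prefix are all hypothetical, chosen only to show that such logic can be checked on the JVM without a device.

```kotlin
// Hypothetical types: a discovered test and a filter deciding whether to run it.
data class TestCase(val className: String, val methodName: String)

fun interface TestFilter {
    fun shouldRun(test: TestCase): Boolean
}

// Hypothetical naming convention: keep only methods whose name starts with a given prefix.
fun methodPrefixFilter(prefix: String) = TestFilter { it.methodName.startsWith(prefix) }

fun main() {
    val tests = listOf(
        TestCase("LoginTest", "smoke_loginSucceeds"),
        TestCase("LoginTest", "loginFailsWithWrongPassword"),
        TestCase("SearchTest", "smoke_searchShowsResults")
    )
    val smokeOnly = methodPrefixFilter("smoke_")
    // Only the tests matching the convention would be sent to Test Lab.
    println(tests.filter(smokeOnly::shouldRun).map { it.methodName })
}
```

Because the filter is just a function over test names, it can be covered by a regular unit test long before any instrumented run.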

Conclusion

Hopefully you will not wait as long as we did before migrating from gcloud to Flank, so that you can start enjoying faster UI test execution and, if you’re lucky, drop the powerful but convoluted gcloud from your toolset.

If you want to have access to my presentation slides, here are links for:

- English : https://bit.ly/ato-flank-en

- 日本語 : https://bit.ly/ato-flank-jp

Some references I used when preparing my talk:

- Flank December 2018,

- Running Android UI Test Suites on Firebase Test Lab (from SoundCloud).